AI image generation on your own computer - Part 1

Use the Stable Diffusion text-to-image model to generate images on your own machine, without relying on a web service

Introduction

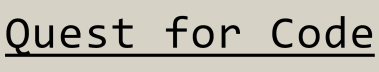

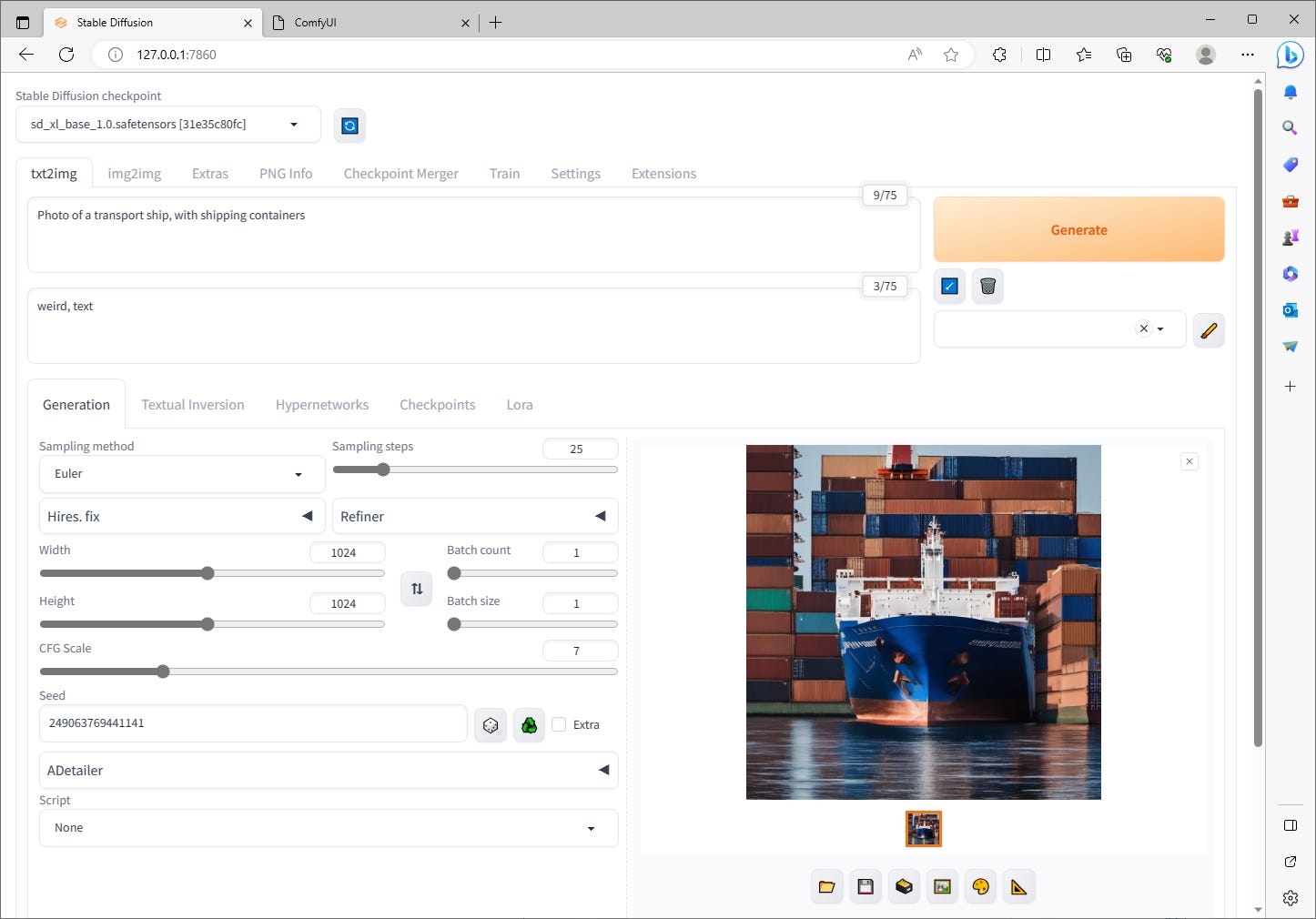

AI-generated content is taking over the web. You can no longer be sure what is AI-generated and what is not. Is the main image of my post Containers - Part 1 AI-generated, or is it a photo? If you look closely, you may notice that something is a bit off: the containers at the back look a bit weird. That is because it is an AI-generated image, one that was generated in a bit of a hurry and without strict quality requirements. And is my avatar on this platform an artist’s view of me, or was it generated by AI?

I generated the container image using Stable Diffusion XL 1.0. I didn’t use any web service, since it is really simple to set up Stable Diffusion to generate images on your own computer. The only limiting factor is that you should have an NVIDIA RTX GPU with at least 8 GB of memory. I’ve seen that AMD GPUs work too, but I have no first-hand experience with them.

Tools

I like command prompts or terminals, but for something as complex as AI image generation can get, I prefer to have a good UI. I’ve tried two of them:

AUTOMATIC1111’s Stable Diffusion web UI

ComfyUI

I will refer to AUTOMATIC1111’s Stable Diffusion web UI as A1111 from now on for easier readability.

Both user interfaces are web-based. You start a service on your computer, and then navigate to the service’s address with your browser. I find A1111 easier to get started with, but in my opinion it is not as flexible as ComfyUI. When I first tried AI image generation I used A1111, but I have now moved on to ComfyUI, because I feel like I’m more in control of the whole process.

Let’s take a look at the two user interfaces.

A1111

A1111 has a more traditional UI with a tabbed layout. For a technical person it’s quite easy to start trying things out, without much of a learning curve. Of course, to make really nice images, you need to know what you are doing and which tools to use for which purpose.

ComfyUI

ComfyUI looks far more intimidating at first, because it is basically a blank canvas to which you add nodes. These nodes have inputs and outputs, and they connect to other nodes. These kinds of UIs are sometimes called no-code tools: instead of writing lines of code, you add nodes that data flows through. This isn’t really coding, but there are similarities.
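
To make the similarity to coding a bit more concrete, here is a minimal Python sketch of a node graph. It is not ComfyUI’s actual API or file format; the `Node` class and the three example nodes are hypothetical and only show how data can flow from one node’s output into another node’s input.

```python
class Node:
    """A processing step with named inputs and a single output (hypothetical)."""

    def __init__(self, name, func, **inputs):
        self.name = name
        self.func = func
        self.inputs = inputs  # each input is a plain value or another Node

    def evaluate(self):
        # Resolve upstream nodes first, then run this node's own function.
        resolved = {
            key: value.evaluate() if isinstance(value, Node) else value
            for key, value in self.inputs.items()
        }
        return self.func(**resolved)


# Three made-up nodes wired together: two feed into the third.
prompt = Node("prompt", lambda text: text.lower(), text="A Red Container Ship")
seed = Node("seed", lambda value: value, value=42)
sampler = Node(
    "sampler",
    lambda prompt, seed: f"image({prompt!r}, seed={seed})",
    prompt=prompt,
    seed=seed,
)

print(sampler.evaluate())  # image('a red container ship', seed=42)
```

Asking the last node for its result pulls data through the whole graph, which is roughly what happens when you queue a workflow in a node-based UI.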

Something to consider

Text-to-image generation uses random noise during the generation process. If you want to generate the same picture again, you need to use the same seed for the random number generator, along with the same parameters.
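
The effect of a seed can be shown with a few lines of Python. This is only an illustration using the standard library’s `random` module, not how Stable Diffusion creates its noise; the `make_noise` helper is a made-up name.

```python
import random


def make_noise(seed, size=4):
    """Return a short list of Gaussian noise values for the given seed."""
    rng = random.Random(seed)  # a generator that depends only on the seed
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


first = make_noise(42)
second = make_noise(42)
other = make_noise(43)

print(first == second)  # True: same seed, same noise
print(first == other)   # False: different seed, different noise
```

Since the starting noise is what the image is built from, the same seed combined with the same settings leads to the same picture.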

The two user interfaces don’t produce the same images, even with the same parameters and seed, because they generate their random noise differently. So if you first try one of these UIs and then move on to the other, please note that you might not be able to reproduce the exact same image.
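
As a rough analogy, the sketch below feeds the same seed to two different random number generators. Neither of them is what A1111 or ComfyUI actually uses; `noise_mersenne` and `noise_lcg` are hypothetical helpers, and the second one is a toy linear congruential generator written just for this comparison.

```python
import random


def noise_mersenne(seed, size=4):
    """Noise from Python's built-in generator (Mersenne Twister)."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(size)]


def noise_lcg(seed, size=4):
    """Noise from a toy linear congruential generator."""
    state = seed
    values = []
    for _ in range(size):
        state = (1664525 * state + 1013904223) % 2**32
        values.append(state / 2**32)
    return values


# Each generator is repeatable on its own...
print(noise_mersenne(7) == noise_mersenne(7))  # True
print(noise_lcg(7) == noise_lcg(7))            # True

# ...but the same seed gives different noise across generators.
print(noise_mersenne(7) == noise_lcg(7))       # False
```

A seed only identifies a picture together with the tool that produced the noise, so it is worth noting down which UI an image came from.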

Coming up

In future parts of this series, you will learn more about Stable Diffusion, its latest model XL 1.0, and ComfyUI. We’ll take a look at other models as well, and at something called LoRA (Low-Rank Adaptation) models, which make it easy to fine-tune models. What is fine-tuning? Subscribe, and you’ll learn.

You’ll also learn the basics of setting up ComfyUI workflows, and how to build some advanced ones. For example, we’ll try to generate the same artificial person in different settings, or in completely different art styles. We’ll even try to make some pixel art with that person in it.